Prologue. Becoming a Hacker

“Stop clicking . . . stop beeping.” —my sister when I was 15 or so

In 1982, Time magazine named the personal computer Machine of the Year, marking the first time a non-human was awarded Man of the Year. It was a fascinating read, but like many nerdy kids across the country at the time, I’d already become captivated by computers.

My best friend Dave Crotty and our other best friend, Neal Fordham (collectively, the three of us were known as the boys), had spent the previous year making mixtapes of ’80s punk and new wave on Dave’s father’s Bang & Olufsen component system.

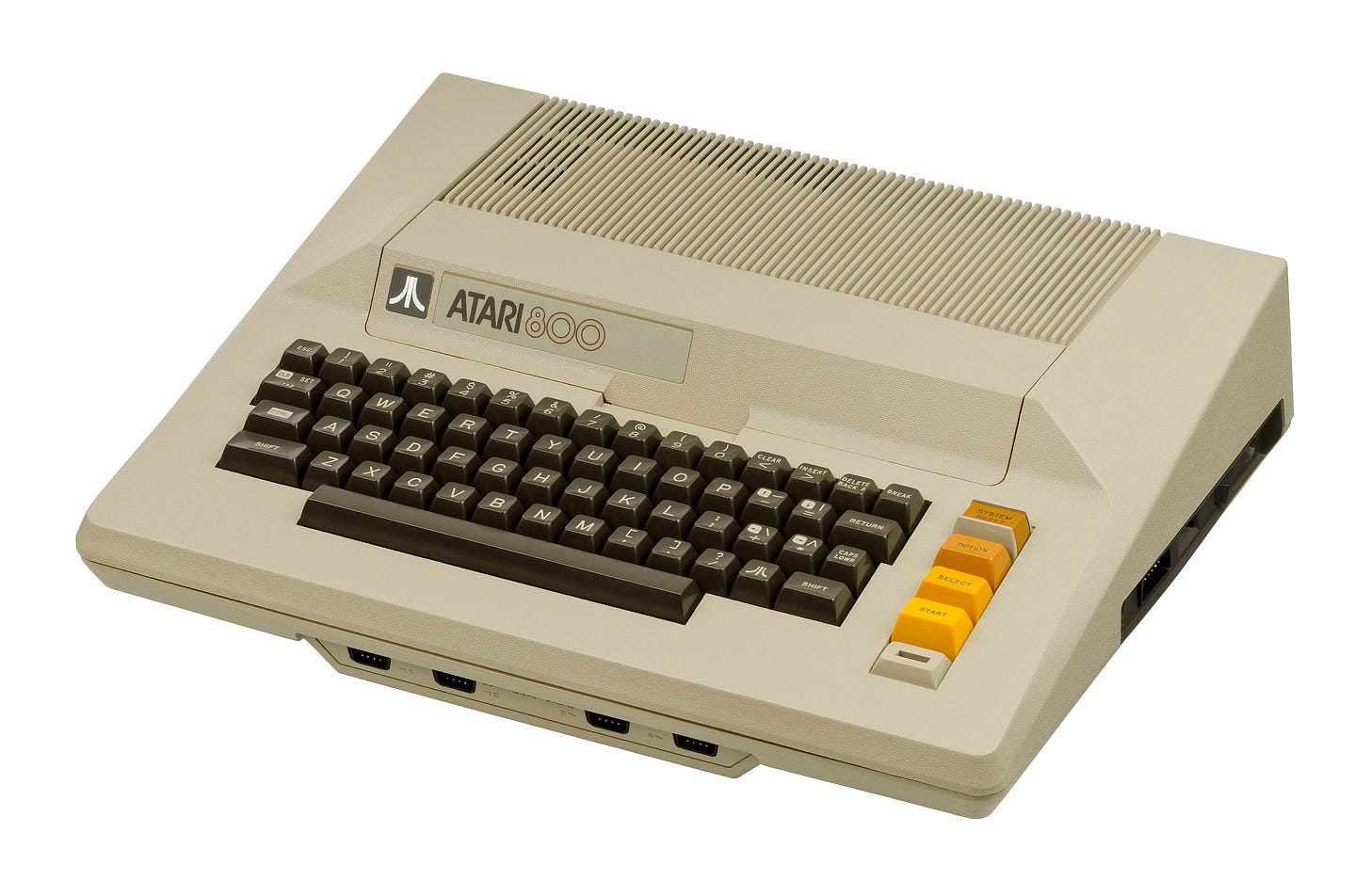

When Dave’s brother Kevin got an Atari 800 computer, my curiosity was piqued. I was mesmerized by this new machine, not by the video games I could play on it but by the presence of BASIC, the first programming language experienced by most everyone in the early days of personal computing. That BASIC was there at all was thanks in no small part to Bill Gates and Paul Allen and their start-up originally known as Micro-Soft.

I gave up Space Invaders for rows of numbered lines. The timing turned out to be great.

Our family business was a retail store in Orlando, Florida. My Saturdays were spent calculating sales tax, doing inventory, and making change while chatting with customers.

Dave’s Atari gave me an opportunity to create my first program:

10 PRINT "Amount of sale?"

20 INPUT sale

30 LET tax = sale*.04

40 PRINT "Merchandise: ", sale

50 PRINT "Tax: ", tax

60 PRINT "Total: ", sale+tax

70 GOTO 10

As the family business evolved, my father, David, realized that turning it into a wholesaler was a great opportunity for the family. He decided to buy a computer to run it. I have no idea where the motivation for this came from, and I certainly knew the expense was significant ($1,800 then or about $5,000 in 2020 dollars). Our family had been early adopters (to some degree) of many modern household items: we had a fancy 35mm camera, a microwave, a Betamax, and even a big-screen TV. A computer, however, was puzzling. It was also an enormous privilege. Rather than a “toy” computer, which is how the Apple ][, Atari, and the new Commodore 64 (64K!) were viewed at the time by those who claimed to know, my father invested in a business computer. He went to a computer store, staffed by people in suits and ties, and bought one of the earliest Osborne I computers.

The Osborne was a remarkable machine, both at the time and in the history of the personal computer. A nearly 30-pound “portable” (it didn’t even have a battery; portable meant you could relocate it) described as “the size of a sewing machine,” it had a 5-inch CRT screen that wasn’t large enough to show a full 80 characters across, so you held the CTRL key and pressed the arrow keys to pan the screen and see the rest of a line. It came with two 90K 5.25-inch floppy drives and 64K of memory. It ran the CP/M operating system (Control Program/Monitor), which at the time was vying to become the de facto standard.

It came with a bundle of “free” business software, including the WordStar word processor, the SuperCalc spreadsheet (a copy of the remarkable VisiCalc on the Apple ][), and two (!) different BASIC languages: MBASIC, which I later learned was Microsoft BASIC, and a faster variant, CBASIC. Notably, a “database” called dBase II was promised but did not arrive until later (“real soon,” the dealer told us).

Magazines were the early fountain of knowledge about the new computer because computers were not connected to one another or to anything else. The first issue of the monthly Portable Companion, free with the computer, was filled with tips and tricks for using the Osborne and the bundled software. I dutifully filled out reader response cards and soon had a library of printer configuration codes and code samples I could type in. I read Dr. Dobb’s and BYTE at B. Dalton Bookseller in the mall instead of playing games.

I set up the computer in the tiny extra room that served as the TV room for my sister and me, much to her chagrin. The noise created by the combination of typing on the full-travel keyboard and the constant grinding and clacking of the floppy disk drives, not to mention the loud beep at power-on and the whirring fan, took a toll on my younger sister, Jill. Through our lightly constructed 1970s Florida ranch house, I heard her repeatedly whine, “Stop clicking . . . stop beeping.”

I was undeterred.

My father and I spoke twice about the computer. The first time was when we bought the computer for the business and I was left to figure out how to “put it to work,” whatever that meant in 1981. The second time was a few months later, when I was not making enough progress and he basically said he was firing me and was going to hire a professional, whatever that meant. That second conversation lit a fire under me.

I spent a month or two using CBASIC to build an inventory program for the wholesaler. I had no idea how a database worked, what a database table was, or anything like that. There were enough example programs for managing “lists” in CBASIC for me to figure out how to modify them.

Probably just as my father’s patience with me was running out, I was rescued by the delivery of the disks and manual for dBase II. After a few hours of using it and going through the typewritten, photocopied documentation that came with it, a whole new world opened up for me. I immediately began building an entire system for the business.

It took only a few weeks to get accounts, inventory, payables, and invoicing up and running, a tribute to the power of dBase II more than to any skill I had. My father was relieved. I began the job of manually inputting the names and addresses of hundreds of customers and thousands of products.

To store all the data that did not fit on a 90K floppy, I spent weeks evaluating a 10-megabyte hard drive to add to the second Osborne bought for the business (one remained at home for me to program and the other ran the business). The 10-megabyte drive was the size of our Betamax and sounded like a small aircraft, but it dramatically changed how the business could be run. Imagine something like 100 floppies running all at once. It was magic. And it was fast!

Like dBase II, the “300 baud modem” that promised to unlock the world of connecting to other computers over telephone lines was delayed. When it finally arrived, I added a new sound to the clicking and clacking: the audible modem handshake that later came to symbolize “online.”

At first, there wasn’t much to dial up except expensive per-minute professional services that were out of my price range and required a credit card I did not have. After a lucky meeting at the local CP/M User Group (CPMUG, as it was called), where I was the youngest by at least 10 years and the only person there not (yet) working at Martin Marietta or Kennedy Space Center, I learned about FIDONet.

I was finally online. And then I was online all the time (using the second home phone line I received for my Bar Mitzvah).

Being online connected me to user groups, forums, and others writing and exchanging programs. I felt like I was on a new learning curve as every night led to another discovery. Sometimes I learned the arcane aspects of CP/M, such as how to edit the OS code to disable the File Delete command (to make using the computer safer for my father) or to customize WordStar for our printer so it would print “double wide” characters for fancy headings. Other times, I learned some sophisticated dBase II constructs like keeping multiple tables connected and in sync for reporting. It was also in an online forum that I learned about the IBM PC and how it was going to win out over CP/M, TRS-80, and Apple Computer, the other ever-present computer systems.

So much was changing in such a short amount of time. That year, fewer than two million PCs built by dozens of companies were sold, each computer running different and incompatible software, as if early automobiles needed different roads for each car maker. A year earlier, IBM had introduced the IBM PC and was welcomed to the PC Revolution by five-year-old Apple Computer in a full-page advertisement in the Wall Street Journal.

It was early in the PC Revolution.

Cornell University’s computer science program, one of the first in the country, started in 1965, the year I was born. As 1982 wound down, I was admitted to Cornell.

Prompted by that Time article, my mother, Marsha, told me that computers were a fine hobby, but she reminded me that I wanted to be a doctor. I received a good talking-to once she read the descriptions of “hacker” culture: flannel shirts, no shoes, and working late at night in the solitary computer rooms of the nation’s colleges. It all sounded too close to late-night beeping and clicking. She wanted assurance that I was attending Cornell to study something more in line with what was expected, what I wanted. She was concerned that I might become a “hacker.”

Too late.

On to 001. Becoming a Microsoftie (Chapter I)